
Camera arrays are an important means of capturing the light field of targets in space. Capturing a high-angular-resolution light field with a large, dense camera array increases both the sampling difficulty and the equipment cost. The resulting large volume of data must also be synchronized and transmitted, and this further limits the scale of light field sampling. To achieve dense reconstruction from a sparsely sampled light field, we use sparse light field data to analyze the spatial and angular correlation and redundancy among multi-view images of the same scene. From this analysis we build an effective mathematical model for light field dictionary learning and sparse coding. The trained light field atoms sparsely express the local spatial-angular consistency of the light field. As a result, a four-dimensional (4D) light field patch can be reconstructed from the two-dimensional (2D) local image patch centered on each sensor pixel. The dictionary maps the global and local constraints of the 4D light field into a low-dimensional space. There they appear as the sparsity of each vector in the sparse representation domain and as constraints on the positions and values of its non-zero elements. Based on these constraints among sparse coding elements, we establish a sparse coding recovery model for virtual angular images and propose a transform-domain sparse coding recovery method. The light field atoms in the dictionary are screened, and the light field patches are represented linearly through the sparse representation matrix of the virtual angular image. Finally, the virtual angular images are constructed by image fusion after the inverse sparse transform. Dense reconstruction experiments on multiple scenes verify the effectiveness of the proposed method. It recovers occlusions, shadows and complex illumination changes with high quality, and can therefore be used for dense reconstruction of sparse light fields in complex scenes. This study achieves dense reconstruction of linearly acquired sparse light fields. Future work will address nonlinearly acquired sparse light fields to advance the practical engineering application of light field imaging.
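The abstract describes recovering a 4D light field patch from the 2D image patch around a pixel through a learned dictionary. One common way to write this is y = Φl with l = Dx and x sparse. The sketch below makes several illustrative choices that this section does not specify: the projection matrix `Phi`, the function names, and Orthogonal Matching Pursuit (OMP) as the sparse solver.

```python
import numpy as np

def omp(A, y, k):
    """Orthogonal Matching Pursuit: greedy x with at most k nonzeros, A @ x ≈ y."""
    norms = np.linalg.norm(A, axis=0)
    norms[norms == 0] = 1.0                 # guard against all-zero columns
    An = A / norms                          # select atoms by normalized correlation
    residual, support, coef = y.astype(float), [], np.zeros(0)
    for _ in range(k):
        j = int(np.argmax(np.abs(An.T @ residual)))
        if j in support:                    # nothing new left to explain
            break
        support.append(j)
        # re-fit all selected coefficients jointly (the "orthogonal" step)
        coef, *_ = np.linalg.lstsq(A[:, support], y, rcond=None)
        residual = y - A[:, support] @ coef
        if np.linalg.norm(residual) < 1e-10:
            break
    x = np.zeros(A.shape[1])
    x[support] = coef
    return x

def reconstruct_lf_patch(D, Phi, y, k):
    """Hypothetical 2D-to-4D step under the model y = Phi @ l, l = D @ x.

    D   : (d4, N) dictionary, columns are vectorized 4D light field atoms
    Phi : (d2, d4) assumed projection of a 4D patch onto the 2D sensor patch
    y   : (d2,) vectorized 2D image patch around one pixel
    """
    x = omp(Phi @ D, y, k)       # sparse code using at most k atoms
    return D @ x, x              # 4D patch estimate and its code
```

Running this for the patch around every pixel yields overlapping 4D estimates, which still have to be fused into full views.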


Keywords:

- light field
- dictionary learning
- sparse coding
- dense reconstruction

Fig. 3. Reconstructed image quality: (a) performance versus sparsity, 256 × 256 pixels; (b) performance versus sparsity, 512 × 512 pixels; (c) PSNR of reconstructed images at different resolutions; (d) performance versus redundancy, 256 × 256 pixels.
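Fig. 3 and Table 1 vary two dictionary parameters: the sparsity K and the redundancy N. This section does not define them, so the sketch assumes N is the number of atoms and K is the maximum number of nonzero coefficients per patch. The trainer is the method of optimal directions (MOD), a simple stand-in that alternates OMP coding with a least-squares dictionary update. It is not necessarily the training procedure used in the paper.

```python
import numpy as np

def omp(D, y, k):
    """Orthogonal Matching Pursuit over a dictionary D with unit-norm columns."""
    residual, support, coef = y.astype(float), [], np.zeros(0)
    for _ in range(k):
        j = int(np.argmax(np.abs(D.T @ residual)))
        if j in support:          # residual already (numerically) explained
            break
        support.append(j)
        coef, *_ = np.linalg.lstsq(D[:, support], y, rcond=None)
        residual = y - D[:, support] @ coef
    x = np.zeros(D.shape[1])
    x[support] = coef
    return x

def learn_dictionary_mod(Y, n_atoms, k, n_iter=10, seed=0):
    """Method of Optimal Directions (MOD), used here as a stand-in trainer.

    Y       : (d, M) array, one vectorized training patch per column
    n_atoms : dictionary size N (assumed meaning of "redundancy")
    k       : sparsity K, max nonzeros per code
    """
    rng = np.random.default_rng(seed)
    m = Y.shape[1]
    # initialise the atoms with randomly chosen training patches
    D = Y[:, rng.choice(m, size=n_atoms, replace=n_atoms > m)].astype(float)
    D /= np.maximum(np.linalg.norm(D, axis=0), 1e-12)
    for _ in range(n_iter):
        # sparse coding step: a K-sparse code for every patch
        X = np.column_stack([omp(D, Y[:, i], k) for i in range(m)])
        # dictionary update: least-squares D minimising ||Y - D X||_F
        D = np.linalg.lstsq(X.T, Y.T, rcond=None)[0].T
        # atoms used by no patch come back as zero columns; re-seed them
        dead = np.linalg.norm(D, axis=0) < 1e-12
        D[:, dead] = Y[:, rng.choice(m, size=int(dead.sum()))]
        D /= np.maximum(np.linalg.norm(D, axis=0), 1e-12)
    X = np.column_stack([omp(D, Y[:, i], k) for i in range(m)])
    return D, X
```

Larger N and K enlarge both the per-patch OMP search and the least-squares update. That is consistent with the much longer time Table 1 reports for K = 34, N = 1024 (14306.55 s versus 1266.08 s).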

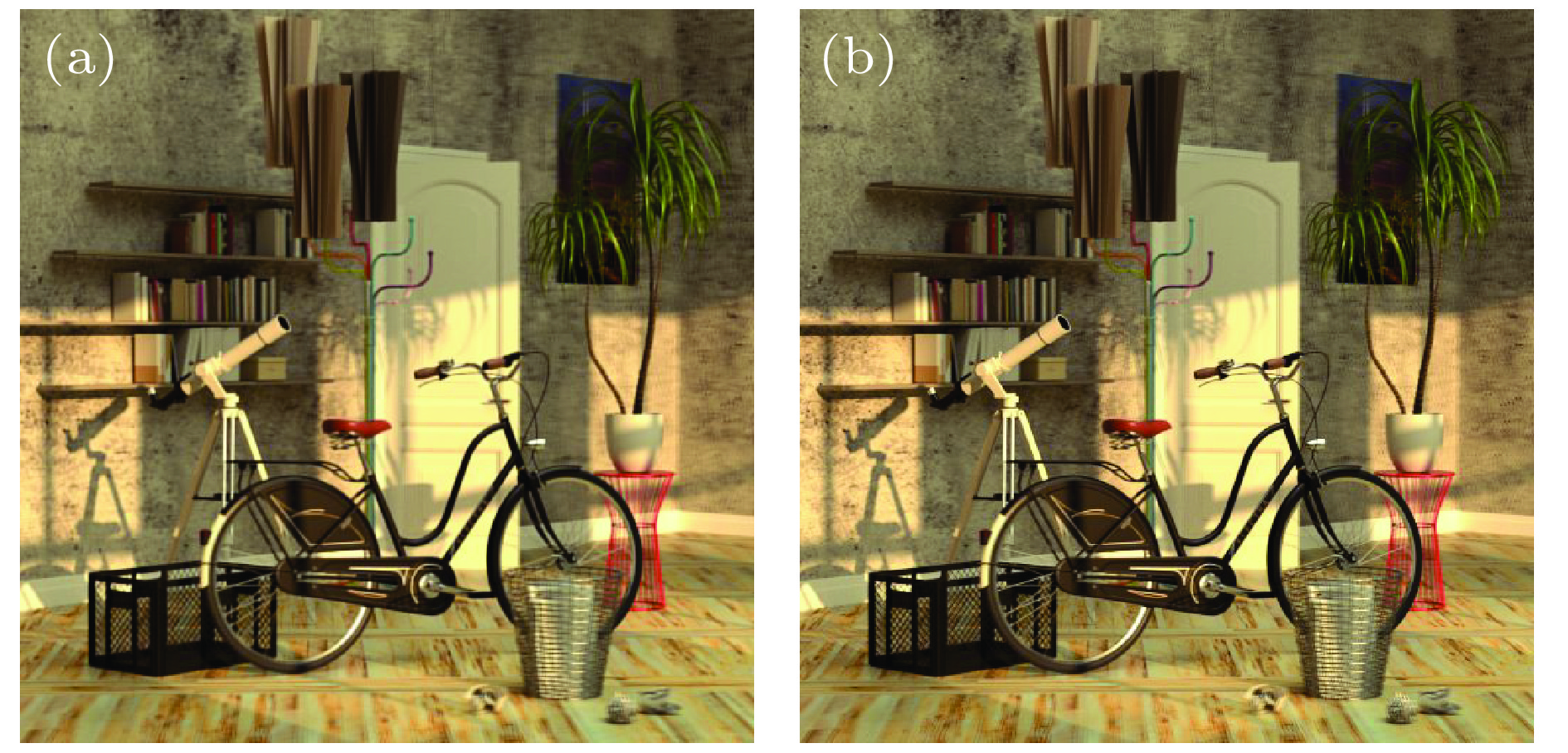

Fig. 5. Dense light field reconstruction with occluded targets: (a) dense light field; (b), (e) reference images; (c), (d) reconstructed virtual angular images of view 2 and view 5; (g), (h) target images; (f), (i) residual images.
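The abstract states that virtual angular images are formed by image fusion after the inverse sparse transform, and Fig. 5 shows residual images. The sketch below covers both steps under two assumptions, since this section defines neither. First, fusion is taken to mean averaging the overlapping patch estimates at each pixel. Second, the residual is taken to be the absolute per-pixel difference between the target and the reconstruction. All function names are hypothetical.

```python
import numpy as np

def sliding_positions(shape, p, stride=1):
    """Top-left corners of every p x p patch in a dense sliding window."""
    h, w = shape
    return [(r, c) for r in range(0, h - p + 1, stride)
                   for c in range(0, w - p + 1, stride)]

def fuse_patches(patches, positions, shape, p):
    """Fuse overlapping p x p patch estimates by averaging them at each pixel."""
    acc = np.zeros(shape)
    weight = np.zeros(shape)
    for patch, (r, c) in zip(patches, positions):
        acc[r:r + p, c:c + p] += patch
        weight[r:r + p, c:c + p] += 1.0
    # pixels covered by no patch are left at 0 rather than divided by zero
    return np.divide(acc, weight, out=np.zeros(shape), where=weight > 0)

def residual_map(target, reconstructed):
    """Absolute per-pixel error between a target view and its reconstruction."""
    return np.abs(np.asarray(target, float) - np.asarray(reconstructed, float))
```

As a sanity check, feeding exact crops of an image back through `fuse_patches` reproduces the image and gives a zero residual. In a real pipeline, each patch would instead be the dictionary reconstruction of a recovered sparse code.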

Table 1. Reconstructed image quality for different sparsity and redundancy settings.

| Sparsity K, redundancy N | MSE | PSNR/dB | SSIM | Time/s |
| --- | --- | --- | --- | --- |
| K = 16, N = 256 | 54.4215 | 30.7731 | 0.8860 | 1266.08 |
| K = 34, N = 1024 | 49.0044 | 31.2285 | 0.8865 | 14306.55 |

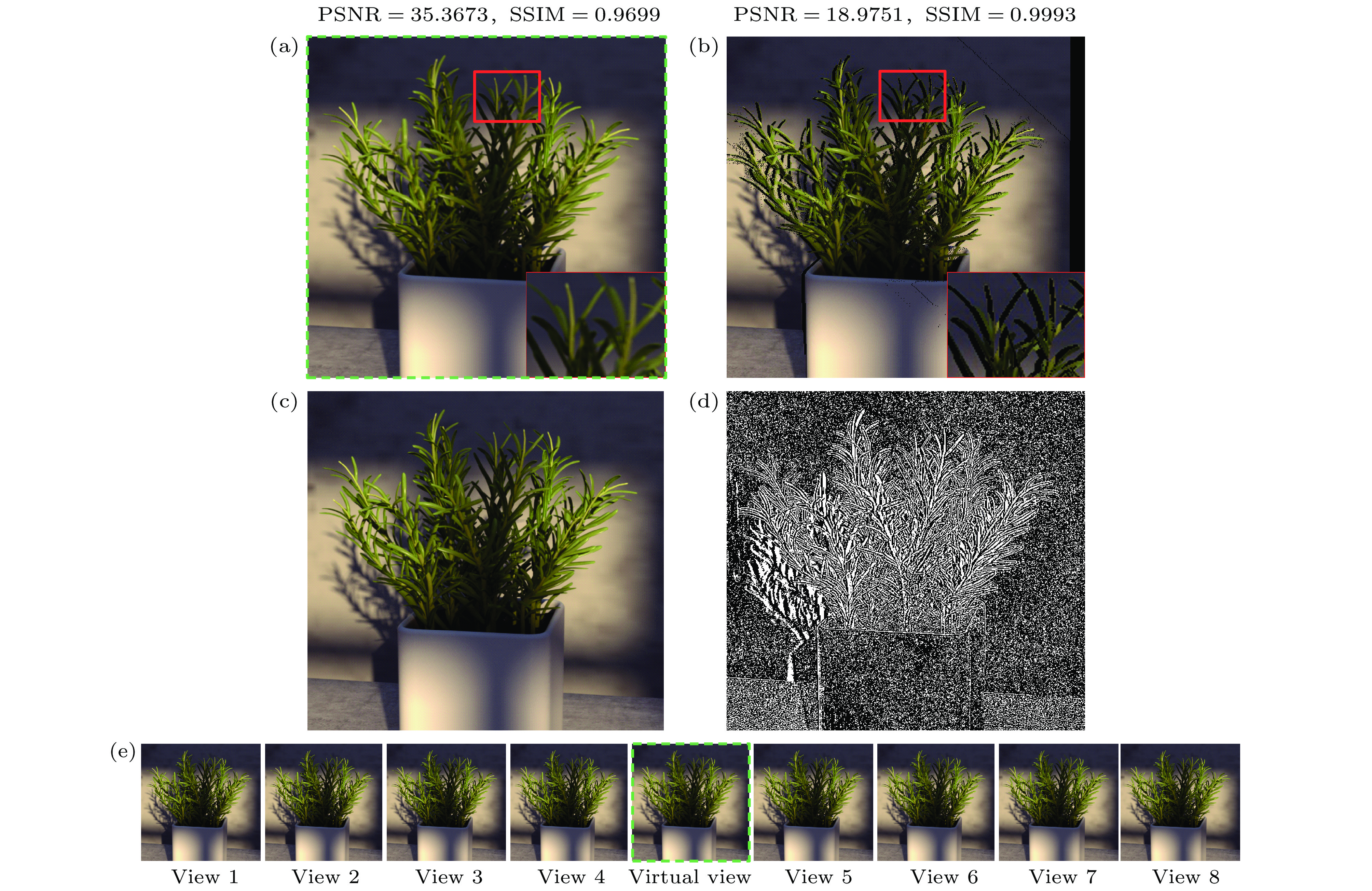

Table 2. Dense reconstruction of light fields in different scenes.

| Scene | Table | Bicycle | Town | Boardgames | Rosemary | Vinyl | Bicycle* |
| --- | --- | --- | --- | --- | --- | --- | --- |
| MSE | 21.2124 | 54.4215 | 25.8005 | 53.9244 | 18.8950 | 22.4756 | 49.0044 |
| PSNR/dB | 34.8649 | 30.7731 | 34.0145 | 30.8129 | 35.3673 | 34.6137 | 31.2285 |
| SSIM | 0.9323 | 0.8860 | 0.9474 | 0.9341 | 0.9699 | 0.9421 | 0.8865 |

\* Sparsity K = 34, redundancy N = 1024.
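Tables 1 and 2 report MSE and PSNR side by side. Every pair agrees, within rounding of the last digit, with PSNR = 10 log10(255² / MSE). This suggests 8-bit images with a peak value of 255, although that peak value is inferred from the numbers rather than stated in this section. A short check:

```python
import math

def psnr_from_mse(mse: float, peak: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB: 10 * log10(peak^2 / MSE)."""
    if mse <= 0:
        return math.inf  # identical images
    return 10.0 * math.log10(peak * peak / mse)

# (MSE, reported PSNR/dB) pairs copied from Table 2
table2 = {
    "Table": (21.2124, 34.8649),
    "Bicycle": (54.4215, 30.7731),
    "Town": (25.8005, 34.0145),
    "Boardgames": (53.9244, 30.8129),
    "Rosemary": (18.8950, 35.3673),
    "Vinyl": (22.4756, 34.6137),
    "Bicycle*": (49.0044, 31.2285),
}

for scene, (mse, reported) in table2.items():
    print(f"{scene:10s} computed {psnr_from_mse(mse):.4f} dB, reported {reported:.4f} dB")
```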
